Newcomb’s problem

Preface

This was written in 2009 although I’ve known about this one-boxing solution for quite a while. Wittgenstein once said that you can tell that an itch is gone if you no longer find yourself returning to scratch it. This solution did it for me. My target is essentially the epigraph by David Lewis at the start of the essay. Lewis was a famous two-boxer and these words of his encapsulate everything that is wrong with two-box reasoning, in my opinion.

Other Sites

Thinking inside the boxes: Newcomb’s problem still flummoxes the great philosophers (2002), by Jim Holt. www.slate.com

Newcomb’s paradox: an argument for irrationality (2010), by Julia Galef. rationallyspeaking.blogspot.com

Theology throwdown: Newcomb’s paradox (2010), by Jethro Flench. www.thesmogblog.com

Newcomb’s problem divides philosophers. Which side are you on? (2016), by Alex Bellos. www.theguardian.com

1. The problem

We never were given any choice about whether to have a million. When we made our choices, there were no millions to be had.

– David Lewis, ‘Why Ain’cha Rich?’

Newcomb’s problem is named after the American physicist William Newcomb, who devised it in the 1960s while thinking about the prisoner’s dilemma.

The Harvard philosopher Robert Nozick wrote an extended essay on the problem in 1969, describing it carefully and isolating the precise difficulty. This seminal paper, ‘Newcomb’s Problem and Two Principles of Choice,’ is widely regarded as the classic introduction to the issue.

Martin Gardner helped popularize the problem through two of his Mathematical Games columns for Scientific American in 1973 and 1974. (He invited Nozick to write the second column.) These lively columns are among the best short introductions to the problem.

Nevertheless, after forty years, the issue remains largely confined to academic circles and is not as well known as it deserves to be.

Briefly put, Newcomb’s problem is a decision problem. A certain situation is described and you must decide which of two choices open to you is the better one to make. The trouble is that each choice has something rather convincing to be said for it, which is why some call this Newcomb’s paradox.

Here’s the situation.

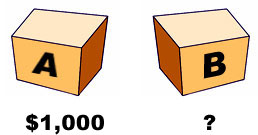

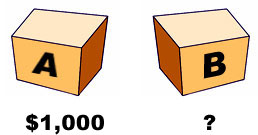

Two boxes, A and B, stand before you. You know that A contains $1,000 for sure, while B contains either $1,000,000 or nothing, though you don’t know which.

You have a choice between taking both boxes or just box B, where you may keep whatever you find in the box or boxes that you take. (The contents of box B will not be revealed until you have made your choice.)

The boxes are sealed and there is no question of their contents being tampered with. Indeed, since, on either choice, you must take box B, you may take it away now for safekeeping, though without opening it yet. The question therefore is whether you want to take box A as well, which you know to contain $1,000. (You may open box A to verify this.)

Why is this a problem? If your sole aim is money, as we may suppose, why not take both boxes and be done with it?

The catch is that the contents of box B depend on what a certain man predicted (beforehand) you would do. This man is a skilled psychologist, say, and known to be very good at anticipating people’s choices. Moreover, if he predicted that you would take just box B, he has left the million dollars in it, but if he predicted that you would take both boxes (or do anything else), he has left box B empty.
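The four possible outcomes can be laid out as a small payoff table. The sketch below is only an illustration in Python; the function name `payoff` and the string labels for the choices are assumptions made for the example, not part of the original statement of the problem.

```python
# Illustrative sketch only: the names and labels here are hypothetical.
BOX_A = 1_000            # box A always holds $1,000
BOX_B_FULL = 1_000_000   # box B holds $1,000,000 only if "B only" was predicted


def payoff(choice: str, prediction: str) -> int:
    """Return the money you walk away with.

    choice     -- "both" or "B only" (what you actually take)
    prediction -- what the predictor foresaw; anything other than
                  "B only" leaves box B empty
    """
    box_b = BOX_B_FULL if prediction == "B only" else 0
    box_a = BOX_A if choice == "both" else 0
    return box_b + box_a


# Print all four combinations of prediction and choice.
for prediction in ("B only", "both"):
    for choice in ("B only", "both"):
        amount = payoff(choice, prediction)
        print(f"predicted {prediction!r}, took {choice!r}: ${amount:,}")
```

Whatever was predicted, taking both boxes yields exactly $1,000 more than taking box B alone; yet the two outcomes in which the prediction is correct pay $1,000,000 (B only) and $1,000 (both).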

His prediction was made yesterday (or even a week beforehand) and the contents of box B have long been settled and may no longer change. You are informed of all this and he knew that you would be so informed.

You have every indication that this man is likely to have predicted your choice correctly, though, of course, there is no way to be absolutely sure of this. While he is a very good predictor, with a very good record of correct predictions, he is known to slip up once in a while. Nevertheless, you tend to believe (with good reason) that he has predicted your choice correctly. Put another way, it’s a good bet that he has.
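To see why the predictor’s reliability matters, one can work out the average payoff of each choice under an assumed accuracy. The problem gives no figure, so the accuracy `p` below is purely hypothetical, as is the function name `expected_payoff`; the sketch shows one way of weighing the two choices, not a verdict on which is right.

```python
def expected_payoff(choice: str, p: float) -> float:
    """Average payoff if the predictor is right with probability p.

    p is an assumed number; the problem only says he is "very good".
    """
    if choice == "B only":
        # Correct prediction: box B is full. Wrong prediction: box B is empty.
        return p * 1_000_000 + (1 - p) * 0
    # choice == "both"
    # Correct prediction: box B is empty, so you keep only box A's $1,000.
    # Wrong prediction: box B is full, so you get $1,000,000 plus $1,000.
    return p * 1_000 + (1 - p) * 1_001_000


# Compare the two choices for a few assumed accuracies.
for p in (0.5, 0.9, 0.99):
    one_box = expected_payoff("B only", p)
    two_box = expected_payoff("both", p)
    print(f"p = {p}: B only = {one_box:,.0f}, both = {two_box:,.0f}")
```

On this way of counting, taking only box B comes out ahead whenever `p` exceeds 0.5005, the point at which the two averages are equal.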

If your sole aim is money, should you take both boxes or just box B? This is Newcomb’s problem.

barang 2009-2025